Tactile Exploration

Motivation and Objectives

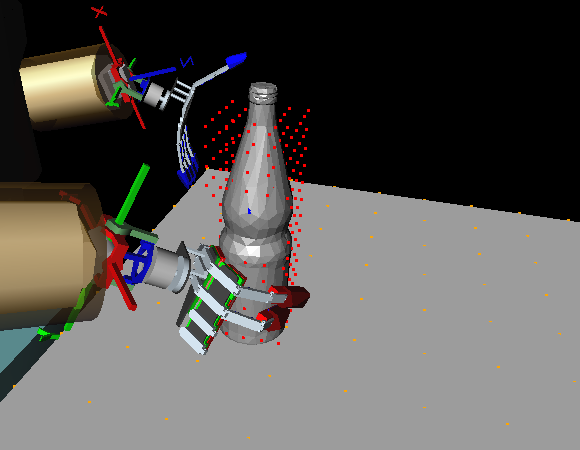

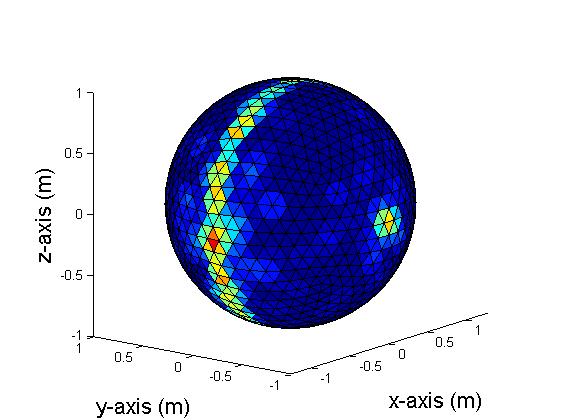

We have developed a tactile exploration strategy that guides an anthropomorphic five-fingered hand along the surface of previously unknown objects in order to build a 3D object representation from the acquired tactile point set. The strategy builds on the dynamic potential field approach originally proposed for mobile robot navigation. We have further developed a scheme for extracting grasp affordances from sparse and irregular 3D point sets, such as those produced by tactile exploration. In this method, faces are extracted from the acquired point set as grasping features. Candidate grasps are then validated in a four-stage filtering pipeline that eliminates infeasible grasps.
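The potential field idea can be illustrated for a single fingertip treated as a free point. The Python sketch below is a generic, minimal illustration and not the authors' implementation. All of the following are assumptions: attractive points stand in for unexplored surface regions, the fingertip follows the nearest remaining attractor in a quadratic well, obstacles repel it with a Khatib-style potential inside a radius `rho0`, and contact with the object surface is not modelled. The gains `k_att` and `k_rep`, the step size and the capture radius are hypothetical values. The "dynamic" aspect is represented by deleting an attractor once the fingertip reaches it, so the field changes as exploration proceeds.

```python
import math

Vec = tuple[float, float, float]


def sub(a: Vec, b: Vec) -> Vec:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def norm(a: Vec) -> float:
    return math.sqrt(a[0] ** 2 + a[1] ** 2 + a[2] ** 2)


def field_force(p: Vec, attractors: list[Vec], repellers: list[Vec],
                k_att: float = 1.0, k_rep: float = 0.01, rho0: float = 0.1) -> Vec:
    """Negative gradient of the summed potentials at point p (illustrative gains)."""
    f = [0.0, 0.0, 0.0]
    if attractors:
        # Quadratic well U = 0.5 * k_att * d^2 around the nearest unexplored attractor.
        target = min(attractors, key=lambda a: norm(sub(a, p)))
        d = sub(target, p)
        for i in range(3):
            f[i] += k_att * d[i]
    for r in repellers:
        # Khatib-style repulsion, active only within distance rho0 of the obstacle.
        d = sub(p, r)
        dist = norm(d)
        if 0.0 < dist < rho0:
            mag = k_rep * (1.0 / dist - 1.0 / rho0) / dist ** 2
            for i in range(3):
                f[i] += mag * d[i] / dist
    return (f[0], f[1], f[2])


def explore(start: Vec, attractors: list[Vec], repellers: list[Vec],
            step: float = 0.1, capture: float = 0.05, max_iter: int = 1000):
    """Follow the field; attractors reached by the fingertip are removed."""
    p = start
    remaining = list(attractors)
    visited: list[Vec] = []
    for _ in range(max_iter):
        # Dynamic field update: drop every attractor within the capture radius.
        reached = [a for a in remaining if norm(sub(a, p)) < capture]
        for a in reached:
            remaining.remove(a)
            visited.append(a)
        if not remaining:
            break
        f = field_force(p, remaining, repellers)
        p = (p[0] + step * f[0], p[1] + step * f[1], p[2] + step * f[2])
    return p, visited, remaining
```

For example, `explore((0.0, 0.0, 0.0), [(1.0, 0.0, 0.0), (1.0, 1.0, 0.0)], [])` visits the nearer attractor first and then the second one. Following only the nearest attractor, rather than summing all of them, keeps the fingertip from settling at their centroid; a real multi-finger system would additionally have to handle local minima and kinematic constraints of the hand.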

Object Representation

Beyond grasping, tactile exploration is also of interest for object recognition. To this end, we have implemented a framework for visual-haptic exploration that acquires 3D point sets during the tactile exploration process. As an initial representation, we chose superquadric functions and conducted experiments on fitting them to exploration data. In future research, we will investigate further object representations suitable for object classification, recognition, and the generation of grasp affordances.
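In its own frame, a superquadric is described by size parameters a1, a2, a3 and shape exponents e1, e2 through the inside-outside function F(x, y, z) = (|x/a1|^(2/e2) + |y/a2|^(2/e2))^(e2/e1) + |z/a3|^(2/e1), with F = 1 on the surface. The Python sketch below evaluates F and a commonly used fitting cost in the form proposed by Solina and Bajcsy, sum of (sqrt(a1 a2 a3) * (F^e1 - 1))^2. It rests on several assumptions not stated in the text above: the object pose is taken as known (centered and axis-aligned), so only the five shape parameters are fitted, and the crude coordinate-descent optimizer, its step sizes and the exponent bounds are illustrative stand-ins for a proper nonlinear least-squares solver such as Levenberg-Marquardt. It is not the fitting procedure used in the experiments.

```python
import math


def spow(base: float, exp: float) -> float:
    """Signed power: sign(base) * |base| ** exp."""
    return math.copysign(abs(base) ** exp, base)


def inside_outside(p, a, e) -> float:
    """F < 1 inside, F = 1 on the surface, F > 1 outside (object frame)."""
    x, y, z = p
    a1, a2, a3 = a
    e1, e2 = e
    xy = (abs(x / a1) ** (2.0 / e2) + abs(y / a2) ** (2.0 / e2)) ** (e2 / e1)
    return xy + abs(z / a3) ** (2.0 / e1)


def fit_cost(points, a, e) -> float:
    """Solina-Bajcsy style cost: sum of (sqrt(a1*a2*a3) * (F**e1 - 1))**2."""
    scale = math.sqrt(a[0] * a[1] * a[2])
    total = 0.0
    for p in points:
        r = scale * (inside_outside(p, a, e) ** e[0] - 1.0)
        total += r * r
    return total


def sample_surface(a, e, n_eta=9, n_omega=16):
    """Sample surface points from the parametric superquadric form."""
    pts = []
    for i in range(n_eta):
        eta = -math.pi / 2 + math.pi * i / (n_eta - 1)
        for j in range(n_omega):
            omega = -math.pi + 2 * math.pi * j / n_omega
            pts.append((a[0] * spow(math.cos(eta), e[0]) * spow(math.cos(omega), e[1]),
                        a[1] * spow(math.cos(eta), e[0]) * spow(math.sin(omega), e[1]),
                        a[2] * spow(math.sin(eta), e[0])))
    return pts


def fit(points, a0, e0, iters=100):
    """Crude coordinate descent over (a1, a2, a3, e1, e2); pose assumed known."""
    params = [*a0, *e0]
    steps = [0.5] * 5
    best = fit_cost(points, params[:3], params[3:])
    for _ in range(iters):
        for i in range(5):
            for delta in (steps[i], -steps[i]):
                trial = params.copy()
                trial[i] += delta
                # Keep sizes positive and exponents in an assumed range [0.1, 2.0].
                if min(trial[:3]) <= 0 or not all(0.1 <= v <= 2.0 for v in trial[3:]):
                    continue
                try:
                    c = fit_cost(points, trial[:3], trial[3:])
                except OverflowError:
                    c = math.inf  # numerically extreme candidate: treat as worst
                if c < best:
                    params, best = trial, c
                    break
            else:
                steps[i] *= 0.5  # no improvement in either direction: refine step
    return params, best
```

As a usage example, points from `sample_surface((1.0, 2.0, 3.0), (1.0, 1.0))` lie on an ellipsoid and give a cost of essentially zero for the true parameters, while `fit(points, (1.5, 1.5, 1.5), (1.0, 1.0))` only ever accepts parameter changes that lower the cost. The sqrt(a1 a2 a3) factor counteracts the bias of the plain F-based residual toward overly large superquadrics.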

Simulation Environment

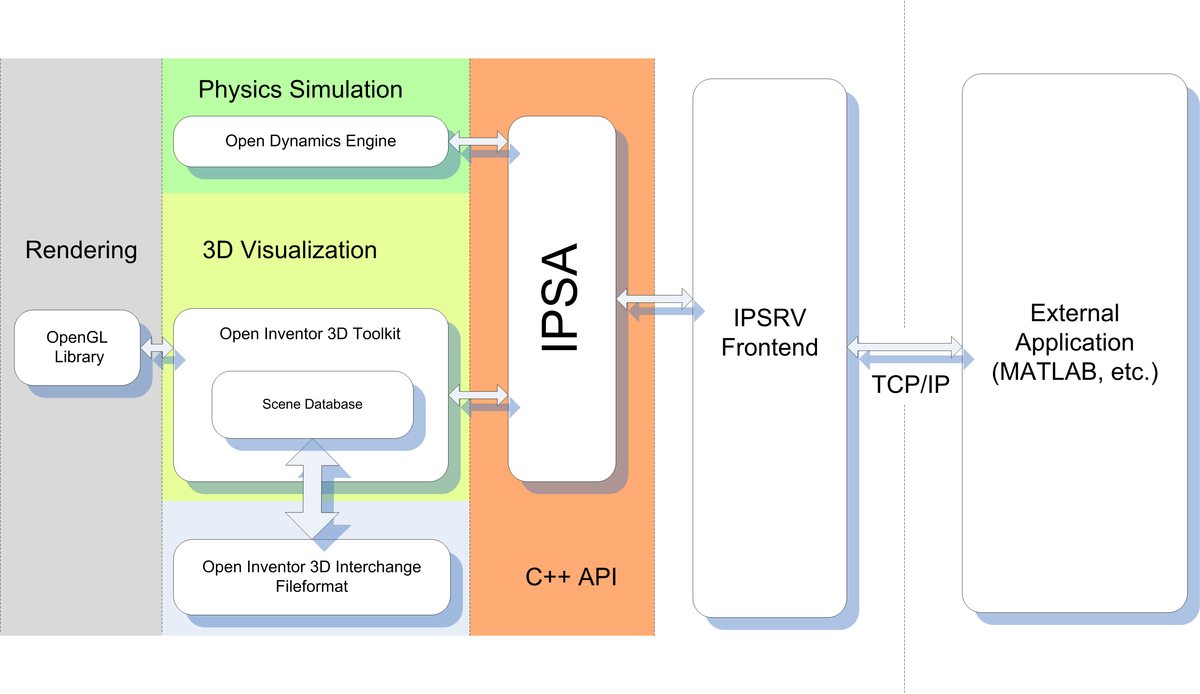

Both the exploration strategy and the grasp affordance scheme have been evaluated in the Inventor Physics API (IPSA) simulator, which was developed specifically for investigating and simulating dexterous manipulation problems. IPSA extends the objects of the Open Inventor toolkit with physical properties and uses the stable and sophisticated ODE (Open Dynamics Engine) library to simulate rigid body dynamics. This lets users specify virtual physical scenes conveniently.

Pneumatic Force/Position Controller

As a further part of our work on multi-fingered manipulation with the humanoid robot ARMAR-III, we have developed a combined force-position controller for a pneumatic anthropomorphic robot hand. Pneumatic hands are a promising technology for humanoid robots due to their compact size and excellent power-to-weight ratio. However, their actuators are difficult to control because of the inherent nonlinearities of pneumatic systems. Our control approach is based on a simplified model of the fluidic actuator and provides force control, position control, and fingertip contact detection. We have implemented the method on the microcontroller of the FRH-4 robot hand, which is the size of a human hand and has 8 DoF. The hand is mounted on ARMAR-III and used for force-controlled operation. In recent experiments, we used the controller and its feedback from pressure and position sensors to extract global haptic features such as object size and deformability.
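The interplay of a simplified actuator model, force/position control and contact detection can be sketched for a single joint. The Python example below is a hypothetical illustration, not the controller running on the FRH-4. It assumes a quasi-static linear model in which the net generalized joint force is F = area * (p - p_atm) - stiffness * q; the parameter values, gains and thresholds are invented. Position control is done by inverting this model to turn a proportional position error into a pressure command. Contact is declared when the model-predicted net force exceeds a threshold while the joint is nearly stationary, and the controller then switches to regulating a desired contact force. The `estimate_stiffness` helper illustrates one possible way to derive a deformability feature as the slope of force over joint angle after contact.

```python
from dataclasses import dataclass


@dataclass
class ActuatorModel:
    """Hypothetical quasi-static model of one fluidic joint actuator.

    Net generalized force: F = area * (p - p_atm) - stiffness * q.
    Values are illustrative, not FRH-4 data."""
    area: float = 1e-4
    stiffness: float = 0.5
    p_atm: float = 1.0e5

    def force(self, p: float, q: float) -> float:
        return self.area * (p - self.p_atm) - self.stiffness * q

    def pressure_for_force(self, f: float, q: float) -> float:
        # Model inversion: pressure that yields net force f at joint angle q.
        return self.p_atm + (f + self.stiffness * q) / self.area


def control_step(model: ActuatorModel, q: float, dq: float, p: float,
                 q_ref: float, f_ref: float, kp: float = 2.0,
                 contact_threshold: float = 0.05, v_eps: float = 0.01):
    """One control cycle returning (pressure command, contact flag)."""
    # In free quasi-static motion the net force is ~0; a large net force on a
    # stalled joint is interpreted as fingertip contact.
    f_ext = model.force(p, q)
    in_contact = f_ext > contact_threshold and abs(dq) < v_eps
    if in_contact:
        f_cmd = f_ref                # force mode: hold the desired contact force
    else:
        f_cmd = kp * (q_ref - q)     # position mode: P-control via model inversion
    return model.pressure_for_force(f_cmd, q), in_contact


def estimate_stiffness(qs: list[float], fs: list[float]) -> float:
    """Least-squares slope dF/dq over in-contact samples (needs distinct qs).

    A small slope suggests a soft, deformable object."""
    n = len(qs)
    mq, mf = sum(qs) / n, sum(fs) / n
    num = sum((q - mq) * (f - mf) for q, f in zip(qs, fs))
    den = sum((q - mq) ** 2 for q in qs)
    return num / den
```

With the default model, a freely moving joint at `q=0.0` with `q_ref=0.5` receives a pressure command of about 1.1e5, whereas a stalled joint whose measured pressure implies a net force of 0.2 is flagged as in contact and commanded to the pressure for `f_ref`. Requiring both a force excess and a stalled joint is a simple guard against mistaking the transient pressure rise of free motion for contact.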