Position-Based Visual Servoing

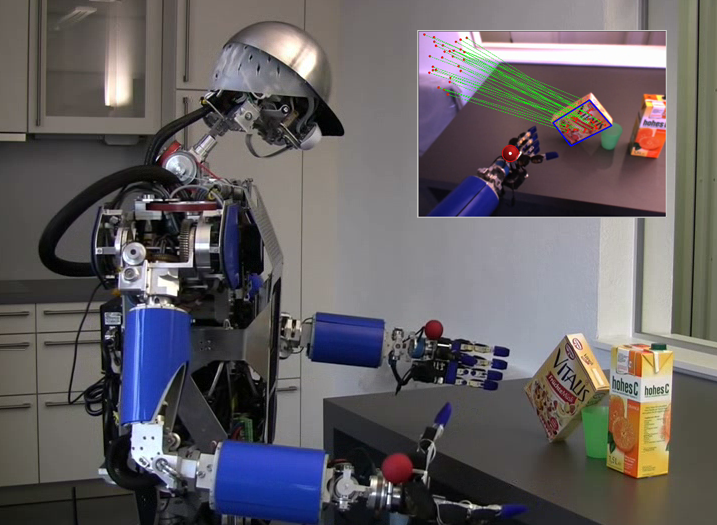

To execute reaching, grasping, or manipulation motions, the humanoid robot must cope with inaccurate object localization, noisy sensor data, and a dynamic environment. We therefore use techniques related to Position-Based Visual Servoing (PBVS) to achieve robust and reactive interaction with the environment. By fusing the sensor channels coming from motors, vision, and haptics, the visual servoing framework enables ARMAR-III to grasp objects and to open doors in a kitchen. Furthermore, the visual servoing algorithms are used for visually guided trajectory execution, so that planned motions are executed reliably in a cluttered environment.
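The core idea of position-based visual servoing described above can be illustrated with a minimal sketch. It is not the ARMAR-III implementation: the function names, the proportional gain, the speed limit, and the restriction to position (orientation is left out) are all assumptions made for illustration. The Cartesian error between the visually observed hand position and the target is turned into a velocity command and re-evaluated in every control cycle, which is what makes the motion reactive to updated observations.

```python
import math


def pbvs_velocity(hand_pos, target_pos, gain=0.5, max_speed=0.1):
    """Cartesian velocity command (m/s) moving the observed hand toward the target.

    hand_pos and target_pos are 3D positions in metres. gain and max_speed are
    hypothetical tuning values, not parameters taken from the robot.
    """
    error = [t - h for t, h in zip(target_pos, hand_pos)]
    velocity = [gain * e for e in error]
    speed = math.sqrt(sum(v * v for v in velocity))
    if speed > max_speed:
        # Keep the direction but limit the magnitude of the command.
        scale = max_speed / speed
        velocity = [v * scale for v in velocity]
    return velocity


def simulate_reach(hand_pos, target_pos, dt=0.1, tolerance=1e-3, max_steps=1000):
    """Idealized loop: the hand follows the commanded velocity exactly."""
    hand = list(hand_pos)
    for step in range(max_steps):
        if math.dist(hand, target_pos) < tolerance:
            return hand, step
        velocity = pbvs_velocity(hand, target_pos)
        hand = [h + dt * v for h, v in zip(hand, velocity)]
    return hand, max_steps
```

On a real robot the Cartesian velocity would still have to be mapped to joint velocities, for example through the arm's Jacobian, and the hand and target positions would be refreshed from the vision system in each cycle.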

Dual Arm Visual Servoing

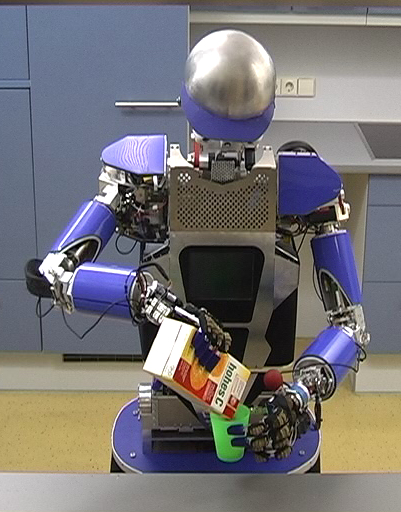

To exploit the full grasping capabilities of a humanoid robot, robust execution of bimanual grasping and manipulation motions must be possible. The bimanual visual servoing framework enables ARMAR-III to robustly execute dual-arm grasping and manipulation tasks. To this end, the target objects and both hands are tracked alternately, and a combined open-loop/closed-loop controller positions the hands with respect to the targets. The control framework for reactive positioning of both hands applies position-based visual servoing and fuses the sensor data streams coming from the vision system, the joint encoders, and the force/torque sensors.
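One way to picture the combined open-loop/closed-loop scheme with alternating tracking is sketched below. This is an assumption about how such a controller could be organized, not a description of the actual framework: the class `HandPositionEstimator`, the function `tracked_hand`, and the strict even/odd alternation are hypothetical. In cycles where a hand is visually tracked, the difference between the visual measurement and the position predicted from the joint encoders is stored as a correction (closed loop). In cycles where the vision system is busy with the other hand or a target, the kinematic prediction plus the last stored correction is used (open loop).

```python
class HandPositionEstimator:
    """Fuses kinematic (joint encoder) and visual hand position estimates."""

    def __init__(self):
        # Last observed difference between visual and kinematic position.
        self.offset = [0.0, 0.0, 0.0]

    def update(self, kinematic_pos, visual_pos=None):
        """Return the current hand position estimate.

        kinematic_pos: position from forward kinematics, always available.
        visual_pos: position from vision, or None if this hand was not
        tracked in the current cycle.
        """
        if visual_pos is not None:
            # Closed-loop cycle: refresh the correction from the new observation.
            self.offset = [v - k for v, k in zip(visual_pos, kinematic_pos)]
        # Open-loop cycles reuse the last correction unchanged.
        return [k + o for k, o in zip(kinematic_pos, self.offset)]


def tracked_hand(cycle):
    """Hypothetical schedule: the vision system alternates between the hands."""
    return "left" if cycle % 2 == 0 else "right"
```

Each hand would own one estimator, and the resulting position estimates can be fed into the same kind of position-based servoing law used for a single arm.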

Zero Force Control for Teaching of Grasp Poses

The humanoid robot is able to grasp objects with one or two hands. To do so, the robot must know positions and approach directions for applying feasible grasps. A convenient way to teach such grasping poses is to employ the zero-force controller, which uses the 6-DOF force/torque sensor at the wrist to react to 3-DOF forces and 3-DOF torques. To teach grasping poses, a human operator moves the hand toward an object and the system stores the object-hand relations in a database. During execution, the stored approach and grasping positions can be used for positioning the hand via visual servoing.
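The teaching procedure above can be sketched in two small pieces. Both are illustrative assumptions rather than the robot's actual controller: `zero_force_twist` shows one common way to make a hand yield to an operator, by commanding a velocity proportional to the measured wrench beyond a deadband, and `to_object_frame` shows how a hand position could be expressed relative to the object before it is stored. The gains, deadbands, and function names are hypothetical, and only the position part of the object-hand relation is handled.

```python
import math


def zero_force_twist(wrench, force_gain=0.01, torque_gain=0.1,
                     force_deadband=1.0, torque_deadband=0.1):
    """Map a measured wrench [fx, fy, fz, tx, ty, tz] to a commanded twist.

    Values inside the deadband are treated as sensor noise and produce no
    motion. Larger values move the hand in the direction the operator pushes.
    """
    def comply(value, deadband, gain):
        if abs(value) <= deadband:
            return 0.0
        # Subtract the deadband so the command starts smoothly from zero.
        return gain * (value - math.copysign(deadband, value))

    forces, torques = wrench[:3], wrench[3:]
    return ([comply(f, force_deadband, force_gain) for f in forces]
            + [comply(t, torque_deadband, torque_gain) for t in torques])


def to_object_frame(hand_pos, obj_pos, obj_rot):
    """Express the hand position in the object frame: R^T (p_hand - p_obj).

    obj_rot is the object's 3x3 rotation matrix (row-major nested lists)
    giving its orientation in the world frame.
    """
    d = [h - o for h, o in zip(hand_pos, obj_pos)]
    return [sum(obj_rot[i][j] * d[i] for i in range(3)) for j in range(3)]


# Hypothetical in-memory stand-in for the grasp database.
grasp_database = {}


def store_grasp(name, hand_pos, obj_pos, obj_rot):
    """Record the taught hand position relative to the object."""
    grasp_database[name] = to_object_frame(hand_pos, obj_pos, obj_rot)
```

Storing the relation in the object frame means the taught grasp remains valid when the object is later found at a different position or orientation; the stored offset is transformed back into the world frame to obtain the servoing target.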